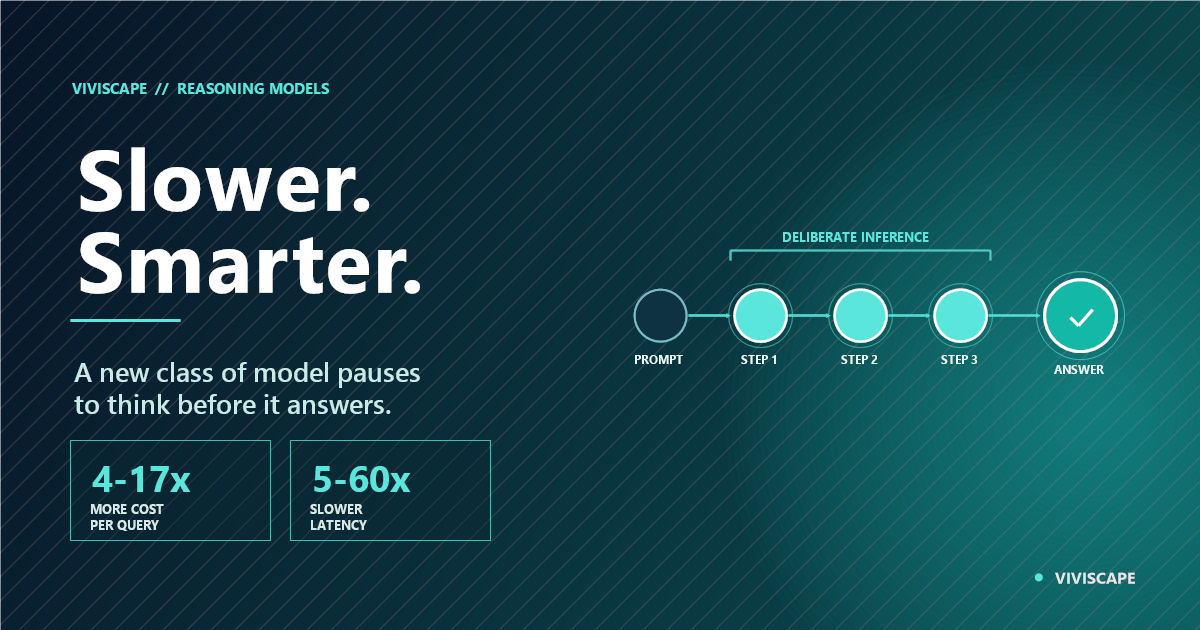

Enterprise AI has a new variable that most organizations are not accounting for. In 2025, a distinct class of AI model entered production: systems that pause before responding, working through problems step by step before producing an answer. These reasoning models — OpenAI's o3, Claude with extended thinking, Gemini's Deep Think mode, and DeepSeek's R1 variants — produce meaningfully better outputs on complex tasks. They also cost 4 to 17 times more per query and respond 5 to 60 times more slowly.

Most enterprises evaluating AI are treating this as a model selection question. It is not. It is an architectural question. Getting it wrong will either leave significant AI capability on the table or produce budget overruns that derail entire programs — often before the organization understands what happened.

What Reasoning Models Actually Do

Standard large language models generate their answer directly. The model reads the input and starts emitting output tokens immediately, with no separate deliberation phase. This is fast, with responses often starting in under a second, and sufficient for most tasks: summarizing documents, drafting communications, classifying content, answering factual questions.

Reasoning models take a different approach. They use additional compute at inference time — called test-time compute — to work through problems iteratively before producing a final answer. The model generates intermediate reasoning steps, evaluates them, refines its approach, and arrives at an answer through a deliberate chain of thought rather than a single pass. This is why they are slower and more expensive: they are doing more work.

The practical result is significant on specific task types. On complex multi-step problems — legal analysis, code review, multi-document synthesis, compliance verification — reasoning models consistently outperform standard models by 8 to 22 percentage points on quality benchmarks. On straightforward tasks, the quality improvement is minimal and the cost premium produces no proportionate return.

That distinction — meaningful capability gains on hard problems, marginal gains on routine tasks — is the entire basis of the enterprise reasoning model decision.

The Cost-Latency Reality Enterprises Are Discovering

The cost structure is not theoretical. OpenAI's o3 at high reasoning effort costs 17 times as much per query as standard GPT-4o. Claude's extended thinking bills the thinking tokens as output tokens, so a long reasoning trace can cost more than the visible answer. Even at the more economical end (medium reasoning effort on newer efficient models), costs run 4 to 5 times standard inference.
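To see where the premium comes from, here is a minimal sketch of per-query cost when reasoning tokens are billed alongside the visible answer. The `Pricing` class, the prices, and the token counts are all hypothetical illustrations, not vendor rates, and the sketch assumes both modes share one price sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices in USD (not real vendor rates)."""
    input_per_mtok: float
    output_per_mtok: float


def query_cost(pricing, input_tokens, output_tokens, thinking_tokens=0):
    """Cost of one query, assuming thinking tokens are billed at the output rate."""
    billed_output = output_tokens + thinking_tokens
    total = (input_tokens * pricing.input_per_mtok
             + billed_output * pricing.output_per_mtok)
    return total / 1_000_000


# Illustrative numbers only: same prompt and answer, with and without a reasoning trace.
pricing = Pricing(input_per_mtok=2.0, output_per_mtok=10.0)
plain = query_cost(pricing, input_tokens=2_000, output_tokens=500)
reasoned = query_cost(pricing, input_tokens=2_000, output_tokens=500,
                      thinking_tokens=8_000)
print(f"plain=${plain:.4f} reasoned=${reasoned:.4f} ratio={reasoned / plain:.1f}x")
```

The ratio is driven almost entirely by the length of the reasoning trace, which is why effort settings that cap thinking tokens move the cost multiplier so much.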

Latency has improved significantly since these models launched. Early reasoning models took 30 to 120 seconds per query. Current implementations complete most enterprise tasks in 3 to 15 seconds. High-effort reasoning on genuinely complex problems can still reach 30 to 60 seconds. For workflows requiring sub-2-second user experience, this remains a disqualifying constraint regardless of quality improvements.

The enterprise math is straightforward. Routing 100% of queries through a reasoning model at typical usage volumes inflates AI compute costs by 4 to 17 times. For a program spending $50,000 monthly on AI infrastructure, that is $200,000 to $850,000 per month for the same volume. No business case supports uniform reasoning deployment.

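But routing only 20% of queries (the complex, high-stakes decisions that genuinely benefit from deeper reasoning) through reasoning models while handling the remaining 80% on standard inference changes the picture. At the multipliers above, the blended bill is 1.6 to 4.2 times the standard baseline, or $80,000 to $210,000 per month for the same $50,000 program. That is roughly 2.5 to 4 times cheaper than uniform deployment, with quality improvements concentrated exactly where they produce value.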
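The saving from selective routing depends on both the share of queries routed and the cost multiplier, so it is worth recomputing with your own numbers. A minimal sketch follows, assuming every query costs the same on standard inference (in practice complex queries tend to be longer); `blended_multiplier` is an illustrative helper, not part of any library.

```python
def blended_multiplier(reasoning_share, reasoning_multiplier):
    """Cost relative to an all-standard baseline when a share of queries uses reasoning."""
    if not 0.0 <= reasoning_share <= 1.0:
        raise ValueError("reasoning_share must be between 0 and 1")
    return (1.0 - reasoning_share) * 1.0 + reasoning_share * reasoning_multiplier


baseline_monthly = 50_000  # USD per month, the example program from the text

for multiplier in (4, 17):
    uniform = baseline_monthly * multiplier
    routed = baseline_monthly * blended_multiplier(0.20, multiplier)
    print(f"{multiplier}x premium: uniform=${uniform:,.0f} "
          f"routed=${routed:,.0f} saving={uniform / routed:.1f}x")
```

Lowering the routed share or choosing a cheaper effort level widens the gap further; raising either narrows it.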

Where the Reasoning Premium Pays Off

Three categories of enterprise work consistently justify the reasoning model cost.

Multi-Document Synthesis and Compliance Analysis

Legal, regulatory, and compliance workflows that require reconciling information across multiple documents, identifying conflicts or gaps, and producing accurate structured outputs are precisely where reasoning models excel. A standard model analyzing a 200-page regulatory filing against a company's compliance posture will miss cross-document implications that a reasoning model works through systematically. The cost of a compliance error — remediation, regulatory exposure, reputational damage — dwarfs the reasoning model premium by orders of magnitude.

Complex Code Review and Architectural Analysis

Software delivery workflows requiring analysis of code interactions across large codebases, identification of security vulnerabilities, and architectural refactoring recommendations show consistent quality improvements with reasoning models. When a wrong answer means a security vulnerability or a production outage, the economics clearly favor the more capable model. The agentic coding transformation reshaping software development leans heavily on reasoning capabilities for exactly these high-stakes analytical tasks.

High-Stakes Decision Support

Fraud detection, contract analysis, financial modeling, and strategic option evaluation — decisions where a wrong output carries significant downstream consequences — justify both the added latency and the cost premium. The calculus is direct: when the cost of a wrong answer exceeds the reasoning model premium by a significant multiple, the economics favor the more thorough system.
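That calculus can be written as a simple expected-value check. The sketch below is one way to frame it. The function name, the error rates, and the dollar figures are hypothetical placeholders that would need to come from your own evaluation data.

```python
def reasoning_pays_off(error_cost, error_rate_standard, error_rate_reasoning,
                       premium_per_query):
    """True when the expected error cost avoided per query exceeds the added model cost."""
    expected_saving = (error_rate_standard - error_rate_reasoning) * error_cost
    return expected_saving > premium_per_query


# Hypothetical contract-analysis task: errors are rare but expensive.
print(reasoning_pays_off(error_cost=5_000.0, error_rate_standard=0.06,
                         error_rate_reasoning=0.02, premium_per_query=0.50))

# Hypothetical summarization task: errors are cheap and the quality gap is small.
print(reasoning_pays_off(error_cost=2.0, error_rate_standard=0.03,
                         error_rate_reasoning=0.025, premium_per_query=0.05))
```

The check is only as good as the measured error rates, so it works best for task types where wrong answers are already tracked and costed.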

On routine tasks — summarization, classification, drafting, question answering — standard models are sufficient. Applying reasoning models to these workflows adds cost without proportionate value and is one of the most common budget leaks in enterprise AI programs.

The Routing Architecture That Makes This Work

The enterprises extracting real value from reasoning models are not deploying them uniformly. They are building routing architectures that match reasoning depth to task complexity.

This routing layer sits between task input and model selection, directing routine queries to fast standard inference and complex queries to reasoning models. The design questions that define it: Which queries in your workflow involve genuine complexity — multiple constraints, cross-document analysis, high-stakes correctness requirements? What is the measurable cost of a wrong answer on different task types? What latency does each step in the workflow actually require?

The answers produce explicit routing criteria — not another AI model deciding which model to use, but deterministic rules based on task classification. The orchestration trap in multi-agent AI is instructive here: adding complexity to the routing layer itself creates new failure points. The routing logic should be testable, auditable, and based on defined task properties rather than emergent from system behavior.
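A deterministic router of this kind can be very small. The sketch below is illustrative only: the `Task` fields, the routine task types, and the 15-second latency threshold are assumptions chosen to mirror the criteria discussed above, not a prescribed schema.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    STANDARD = "standard"
    REASONING = "reasoning"


@dataclass(frozen=True)
class Task:
    task_type: str        # assigned upstream by the workflow, not inferred by a model
    document_count: int
    high_stakes: bool
    max_latency_s: float  # how long the caller can wait for an answer


ROUTINE_TYPES = frozenset(
    {"summarization", "classification", "drafting", "question_answering"}
)
REASONING_MIN_LATENCY_S = 15.0  # assumed budget needed for a reasoning call


def route(task):
    """Return (tier, reason) from explicit rules so every decision is auditable."""
    if task.max_latency_s < REASONING_MIN_LATENCY_S:
        return Tier.STANDARD, "latency budget too tight for reasoning"
    if task.high_stakes:
        return Tier.REASONING, "high-stakes correctness requirement"
    if task.document_count > 1 and task.task_type not in ROUTINE_TYPES:
        return Tier.REASONING, "multi-document analysis"
    return Tier.STANDARD, "routine task"


print(route(Task("compliance_review", document_count=4, high_stakes=True,
                 max_latency_s=120.0)))
print(route(Task("summarization", document_count=1, high_stakes=False,
                 max_latency_s=2.0)))
```

Because the rules are plain conditionals over declared task properties, each one can be unit tested, and the returned reason string gives an audit trail for why a query took the expensive path. Note that in this sketch the latency rule wins over the stakes rule, which is a design choice a real system might reverse by queueing high-stakes work instead.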

The agent governance stack principle applies directly: governance mechanisms that determine which decisions warrant deeper reasoning need to be designed explicitly and maintained actively. As task distributions shift — new workflows, new risk profiles, new cost thresholds — the routing architecture needs to evolve with them.


Three Failure Patterns to Avoid

Uniform deployment. Organizations that apply reasoning models to all queries discover budget overruns before they discover quality improvements. The improvements are real on complex tasks. They do not justify the premium on routine ones. Audit your query distribution before committing to reasoning-first architecture.
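An audit of the query distribution can start as a simple count over existing request logs. The sketch below assumes each log entry already carries a `task_type` label. The field name, the set of complex types, and the sample log are hypothetical.

```python
from collections import Counter

# Assumed labels for task types that warrant deeper reasoning.
COMPLEX_TYPES = frozenset({"compliance_analysis", "code_review", "contract_analysis"})


def audit_query_log(log, complex_types=COMPLEX_TYPES):
    """Summarize what share of logged queries falls into complex task types."""
    counts = Counter(entry["task_type"] for entry in log)
    total = sum(counts.values())
    complex_total = sum(n for task_type, n in counts.items()
                        if task_type in complex_types)
    share = complex_total / total if total else 0.0
    return {"total": total, "complex_share": share, "by_type": dict(counts)}


# Illustrative log: ten queries, two of them complex.
sample_log = (
    [{"task_type": "summarization"}] * 6
    + [{"task_type": "classification"}] * 2
    + [{"task_type": "code_review"}, {"task_type": "compliance_analysis"}]
)
report = audit_query_log(sample_log)
print(f"{report['complex_share']:.0%} of {report['total']} queries are complex")
```

The resulting share is the number to plug into any cost projection before committing to a reasoning-first design.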

Reasoning as a substitute for architecture. Some teams treat reasoning models as a fix for poorly structured inputs or inadequate data preparation. A poorly framed query processed by a reasoning model produces a better answer than the same query on a standard model — but not as good as a well-structured query on standard inference, and often at 5 to 10 times the cost. The right investment sequence is structured inputs first, reasoning models second. The data debt problem does not resolve itself by adding a more expensive model on top of it.

Ignoring latency for synchronous workflows. Reasoning models perform well in asynchronous workflows (batch analysis, overnight processing, queued review tasks) where nobody is waiting on the response in real time. They create poor experiences in synchronous conversational workflows that expect a response within a second or two. Deploying reasoning models in chat interfaces or real-time decision tools without designing for latency produces adoption failures that get attributed to the technology rather than the deployment architecture.
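One possible way to design for latency, offered here as an illustration rather than a recommendation from this article, is to give the reasoning call an explicit deadline and fall back to standard inference when it is missed. Both model functions below are hypothetical stand-ins that simulate latency with `asyncio.sleep`. Real client calls would replace them.

```python
import asyncio


async def call_reasoning_model(prompt):
    """Hypothetical stand-in for a slow reasoning call."""
    await asyncio.sleep(0.2)
    return f"reasoned: {prompt}"


async def call_standard_model(prompt):
    """Hypothetical stand-in for a fast standard call."""
    await asyncio.sleep(0.01)
    return f"standard: {prompt}"


async def answer_within(prompt, deadline_s):
    """Try the reasoning model, falling back to standard inference on timeout."""
    try:
        return await asyncio.wait_for(call_reasoning_model(prompt),
                                      timeout=deadline_s)
    except asyncio.TimeoutError:
        # The abandoned call may still be billed by the provider.
        return await call_standard_model(prompt)


print(asyncio.run(answer_within("status of order 42?", deadline_s=0.05)))
```

A fallback like this protects the user experience but can pay for reasoning work that is thrown away, so for predictably slow tasks it is usually cheaper to route them to a queue up front.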

The Bottom Line

Reasoning models represent a genuine capability advance for a specific category of enterprise work. The category is real, the quality improvements are measurable, and the cost premium is justified at the right task profile. The mistake is treating reasoning capability as a universal upgrade rather than a targeted tool.

The model providers are expanding the reasoning spectrum rapidly — more effort levels, finer cost-quality tradeoffs, domain-specialized reasoning variants. Enterprises that build routing architectures now will be positioned to take advantage of this expanding capability as it matures. Those that deploy uniformly will continue discovering that capability improvements translate into cost escalations before they translate into value.

The enterprise AI decision is no longer just which model to use. It is which model to use for which decision. That architectural question is where the value — and the risk — now lives.

AI architecture is where cost efficiency and capability meet.

ViviScape designs enterprise AI systems that route reasoning depth to where it matters — structured inputs, intelligent task routing, and model selection matched to decision stakes. Not AI that maximizes capability. AI that maximizes value. Schedule a consultation to assess your current AI architecture.
