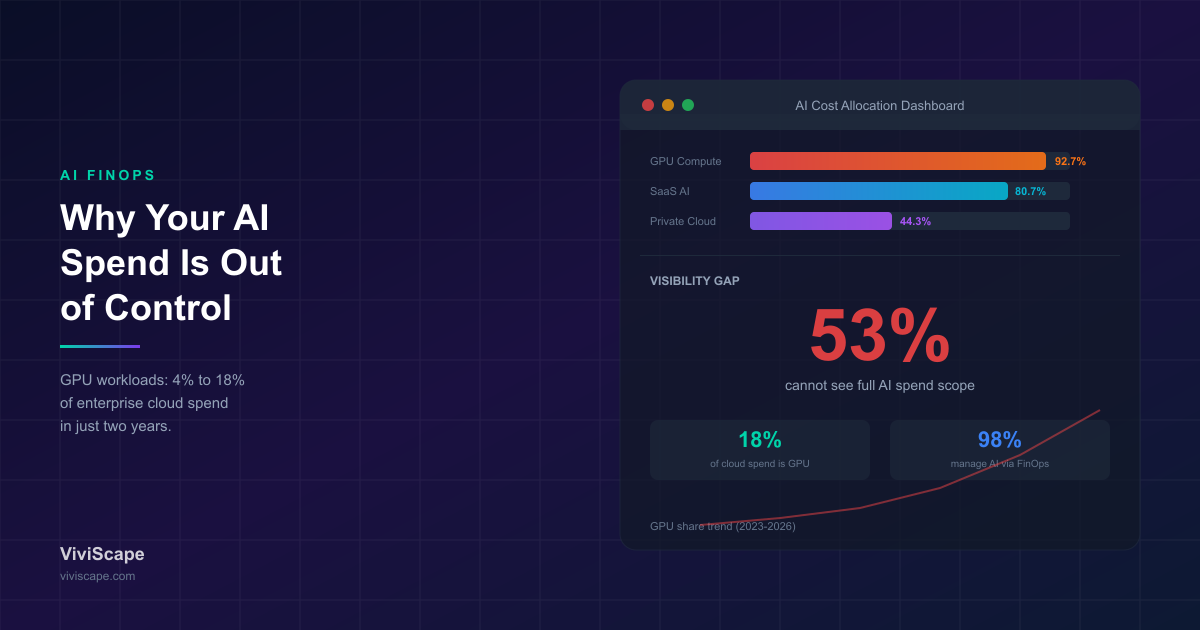

- 98% of organizations now manage AI spend through FinOps (up from 31% two years ago), yet 53.4% name understanding the full scope of their AI spend as their biggest barrier. At AI-forward enterprises, GPU workloads now account for 18% of cloud spend, up from 4% in 2023.

- AI spend behaves differently from traditional cloud spend: it is variable, model-dependent, and distributed across public cloud (92.7%), SaaS AI (80.7%), private cloud (44.3%), and data center (42.9%). SaaS AI is the fastest-growing category and the hardest to track.

- Shift-left economics is the defining 2026 practice: introduce cost modeling before deployment, not after. Once models are trained, pipelines deployed, and embeddings populated, the cost structure is locked in.

- 40% of organizations cannot quantify AI value; 39% struggle with equitable cost allocation. By 2027, G1000 firms face up to a 30% rise in underestimated AI infrastructure costs unless observability is built in from day one.

Two years ago, 31 percent of organizations managed AI spend through their FinOps practice. Today, that number is 98 percent. GPU-intensive workloads now account for 18 percent of total cloud spend at AI-forward enterprises — up from 4 percent in 2023. AI cost management has become the single most desired skillset across organizations of all sizes.

And yet, 53.4 percent of those same organizations say their biggest barrier is understanding the full scope of what they are actually spending on AI.

This is the AI FinOps paradox: nearly everyone is managing AI costs, and almost nobody can see them clearly. The money is moving faster than the visibility. And without visibility, cost optimization is just guesswork with a dashboard.

The Invisible Spend Problem

The AI ROI Reckoning established that most enterprises cannot demonstrate returns from their AI investments. The FinOps data reveals why: you cannot measure returns on spending you cannot see.

AI spend is fundamentally different from traditional cloud spend. It is variable, model-dependent, usage-driven, and distributed across infrastructure layers that existing cost allocation tools were never designed to track. A single AI workflow can consume compute from GPU clusters, storage from vector databases, bandwidth from API calls, and licensing from multiple model providers — all in a single request cycle.

Forty percent of organizations cannot quantify AI value. Thirty-nine percent struggle with equitable cost allocation — determining which team, product, or initiative should bear the cost of shared AI infrastructure. These are not measurement failures. They are architectural failures. The AI was deployed without the cost observability layer that makes management possible.
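One common way to approach equitable allocation is to split a shared bill in proportion to metered usage. The sketch below assumes usage is already metered per team; the function name, team names, GPU-hour figures, and dollar amounts are all hypothetical and not taken from the FinOps data.

```python
def allocate_shared_cost(total_cost, usage_by_team):
    """Split a shared bill across teams in proportion to metered usage.

    usage_by_team maps a team name to any consistent usage unit
    (GPU-hours here). All names and numbers are illustrative.
    """
    total_usage = sum(usage_by_team.values())
    if total_usage <= 0:
        raise ValueError("no usage recorded; cannot allocate proportionally")
    return {
        team: round(total_cost * usage / total_usage, 2)
        for team, usage in usage_by_team.items()
    }


# Hypothetical month: one shared GPU cluster, three consuming teams.
gpu_hours = {"search": 600, "support-bot": 300, "analytics": 100}
shares = allocate_shared_cost(50_000.0, gpu_hours)
```

Proportional allocation is only one policy. Some organizations split a fixed baseline evenly and allocate only the variable portion by usage; the right choice depends on how the shared infrastructure is actually consumed.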

By 2027, G1000 organizations face up to a 30 percent rise in underestimated AI infrastructure costs. The underestimation is not because leaders are ignoring AI costs. It is because AI costs behave differently from the workloads their forecasting models were built to predict.

Where the Money Actually Goes

The State of FinOps 2026 report, representing 1,192 respondents managing over 83 billion dollars in annual cloud spend, shows where enterprises are placing AI investment. The categories overlap, so the figures add up to more than 100 percent:

- Public cloud: 92.7 percent

- SaaS-based AI: 80.7 percent, up from 69.4 percent

- Private cloud: 44.3 percent

- Data center: 42.9 percent

The multi-destination pattern is the problem. AI spend is not a single line item in a single cloud bill. It is distributed across public cloud compute, SaaS subscriptions, private infrastructure, and increasingly, sovereign deployments that require regional infrastructure. Each destination has its own pricing model, its own metering, and its own blind spots.

SaaS-based AI is the fastest-growing category — and the hardest to track. When a team subscribes to an AI-powered analytics tool, a coding assistant, and a document processing service, each with its own per-seat or per-token pricing, the cumulative AI spend is invisible to traditional cloud FinOps. The 80.7 percent figure means that for most enterprises, a significant portion of AI spend lives outside the cloud cost management tools they already have.

The Shift-Left Imperative

The most significant finding from the 2026 FinOps landscape is not about optimization. It is about timing.

Pre-deployment architecture costing — the ability to understand the financial impact of an AI system before it is provisioned — emerged as the top desired tool capability that does not yet exist. Practitioners want financial context introduced before infrastructure is deployed, not after the bill arrives.

This is the "shift-left" principle applied to AI economics: move cost decisions earlier in the development lifecycle, when architectural choices still have leverage. Once a model is trained on a specific GPU cluster, once an inference pipeline is deployed across regions, once a vector database is populated with embeddings — the cost structure is locked in. Optimizing after deployment is rearranging deck chairs.

The shift-left approach requires a fundamental change in how AI projects are planned. Every AI initiative should include a cost model alongside the capability model — not as an afterthought, but as a design constraint. What does this workflow cost per transaction? How does cost scale with usage? What happens to the economics when you need to run it in multiple regions for data sovereignty compliance?

Organizations that cannot answer these questions before deployment will answer them after — when the options are limited and the spend is already committed.
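Those three questions (cost per transaction, scaling with usage, multi-region economics) can be written down as a small cost model before anything is provisioned. This is a minimal sketch under stated assumptions: every price and volume is a made-up placeholder, the class and field names are hypothetical, and traffic is assumed to be split across regions while each region carries its own fixed cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowCostModel:
    """Pre-deployment cost model for one AI workflow (illustrative only)."""
    tokens_per_transaction: int
    price_per_1k_tokens: float           # USD, hypothetical rate
    vector_queries_per_transaction: int
    price_per_vector_query: float        # USD, hypothetical rate
    fixed_monthly_per_region: float      # USD, e.g. always-on endpoints

    def cost_per_transaction(self) -> float:
        token_cost = self.tokens_per_transaction / 1000 * self.price_per_1k_tokens
        query_cost = self.vector_queries_per_transaction * self.price_per_vector_query
        return token_cost + query_cost

    def monthly_cost(self, transactions: int, regions: int = 1) -> float:
        # Assumption: total traffic is shared across regions, but every
        # region pays its own fixed infrastructure cost.
        variable = self.cost_per_transaction() * transactions
        fixed = self.fixed_monthly_per_region * regions
        return variable + fixed


model = WorkflowCostModel(
    tokens_per_transaction=2000,
    price_per_1k_tokens=0.01,
    vector_queries_per_transaction=3,
    price_per_vector_query=0.0005,
    fixed_monthly_per_region=4000.0,
)
one_region = model.monthly_cost(transactions=1_000_000, regions=1)
three_regions = model.monthly_cost(transactions=1_000_000, regions=3)
```

Even a model this crude makes the sovereignty question concrete: under these assumptions, adding regions raises only the fixed component, so the gap between `one_region` and `three_regions` is a number that can be debated at architecture review.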

From Cost Management to Value Management

The FinOps discipline is undergoing a strategic transformation that directly affects how enterprises should think about AI economics.

Seventy-eight percent of FinOps teams now report to the CTO or CIO — up 18 percent from 2023. CFO reporting has declined to 8 percent. This organizational shift reflects a deeper change: FinOps is evolving from a cost-reporting function into a strategic decision-support system for technology investment.

The "shift-up" movement means FinOps leaders now participate in provider negotiations, commitment modeling, and even mergers and acquisitions discussions. Organizations with executive-level FinOps alignment demonstrate two to four times greater influence over technology selection decisions.

For AI specifically, this means the conversation is moving from "how do we reduce our GPU bill?" to "how do we ensure our AI investments create proportional business value?" That is a fundamentally different question — and it requires different tools, different metrics, and different organizational authority.

The 58 percent of organizations that prioritize workload optimization report diminishing returns. The big efficiency wins have been captured. The next wave of impact comes from governing and shaping spend before it happens — making cost-aware decisions at the architecture level, not the invoice level.

The Lean Team Problem

Even at the highest spend levels (organizations managing over 100 million dollars in annual cloud spend), FinOps teams remain remarkably lean: 8 to 10 practitioners plus 3 to 10 contractors. These small teams are now responsible for managing AI spend that is growing at multiples of traditional cloud growth, across infrastructure types their tools were not designed to monitor.

Meanwhile, 46.5 percent of organizations must deploy applications 50 to 100 percent faster than they did three years ago. The speed pressure means AI workloads are being provisioned faster than cost governance can evaluate them, which creates the exact visibility gap that 53.4 percent of organizations are struggling with.

This is not a staffing problem that can be solved by hiring more analysts. It is an architecture problem. AI cost governance must be automated, embedded in deployment pipelines, and enforced through policy — not through manual review of bills that arrive weeks after the spend occurred.

The agent governance stack provides a useful parallel: just as autonomous agents require runtime policy enforcement to operate safely, AI workloads require runtime cost governance to operate efficiently. The enforcement must happen at machine speed, at the point of deployment, before the cost is committed.

Five Practices That Actually Work

Based on the FinOps 2026 data and the organizations that are successfully managing AI costs, five practices distinguish leaders from laggards:

1. Tag Everything at the Model Level

Traditional cloud tagging tracks compute instances and storage volumes. AI FinOps requires tagging at the model level — which model, which version, which use case, which business unit. Without model-level attribution, you cannot connect AI costs to AI outcomes.
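As a rough illustration of model-level attribution, the sketch below attaches the four dimensions named above (model, version, use case, business unit) to each cost record and rolls spend up along any one of them. The `ModelTag` type, the `cost_by` helper, and all records are hypothetical; real billing exports will have their own schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTag:
    """The four attribution dimensions attached to every cost record."""
    model: str
    version: str
    use_case: str
    business_unit: str


def cost_by(records, field):
    """Sum cost over one tag dimension, e.g. 'model' or 'business_unit'."""
    totals = defaultdict(float)
    for tag, cost in records:
        totals[getattr(tag, field)] += cost
    return dict(totals)


# Hypothetical monthly cost records: (tag, cost in USD).
records = [
    (ModelTag("summarizer", "v2", "ticket-triage", "support"), 1200.0),
    (ModelTag("summarizer", "v3", "ticket-triage", "support"), 800.0),
    (ModelTag("ranker", "v1", "product-search", "commerce"), 2500.0),
]
by_unit = cost_by(records, "business_unit")
by_model = cost_by(records, "model")
```

Because the tag carries the version, the same records can also show what a model upgrade did to spend, which instance-level tags cannot.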

2. Forecast by Workload Pattern, Not by Historical Spend

AI cost patterns do not follow the linear extrapolation that works for traditional cloud. A new model deployment, a change in inference batch size, or a shift in user adoption can change costs by orders of magnitude. Forecast based on workload characteristics — tokens processed, inference calls, training cycles — not on last month's bill.
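A workload-driven forecast can be as simple as multiplying expected volumes by unit prices. The sketch below is an assumption-laden example: the driver names, unit prices, and volumes are invented for illustration, and a real forecast would also need tiered pricing and commitment discounts.

```python
def forecast_monthly_cost(workload, unit_prices):
    """Forecast spend from workload drivers instead of last month's bill.

    workload and unit_prices are dicts keyed by the same driver names.
    """
    missing = set(workload) - set(unit_prices)
    if missing:
        raise KeyError(f"no unit price for: {sorted(missing)}")
    return sum(volume * unit_prices[name] for name, volume in workload.items())


# Hypothetical unit prices in USD per driver unit.
unit_prices = {
    "tokens_millions": 2.0,
    "inference_calls_thousands": 0.4,
    "training_gpu_hours": 3.0,
}
current = {
    "tokens_millions": 500,
    "inference_calls_thousands": 1000,
    "training_gpu_hours": 200,
}
# Scenario: user adoption grows tenfold, training volume stays flat.
after_rollout = {
    "tokens_millions": 5000,
    "inference_calls_thousands": 10000,
    "training_gpu_hours": 200,
}
baseline = forecast_monthly_cost(current, unit_prices)
projected = forecast_monthly_cost(after_rollout, unit_prices)
```

In this made-up scenario the projected bill is several times the baseline, a jump that extrapolating from historical spend would not surface until the invoice arrived.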

3. Establish Cost Guardrails Before Deployment

Set maximum cost thresholds for AI workloads as part of the deployment approval process. If a projected AI system will cost more than the business value it generates, that should be discovered during architecture review, not during quarterly budget reconciliation.
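One possible shape for such a guardrail is a check that runs during deployment approval and rejects a workload when its projected cost breaches a threshold or exceeds its expected value. This is a sketch, not a reference implementation: the function, its parameters, and the example figures are hypothetical, and estimating business value is the hard part that the code takes as given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GuardrailResult:
    approved: bool
    reason: str


def check_cost_guardrail(projected_monthly_cost, max_monthly_cost,
                         expected_monthly_value):
    """Approve a deployment only if projected cost passes both checks."""
    if projected_monthly_cost > max_monthly_cost:
        return GuardrailResult(False, "projected cost exceeds the approved threshold")
    if projected_monthly_cost > expected_monthly_value:
        return GuardrailResult(False, "projected cost exceeds expected business value")
    return GuardrailResult(True, "within threshold and below expected value")


# Hypothetical reviews (all amounts in USD per month).
over_budget = check_cost_guardrail(12_000, 10_000, 50_000)
negative_roi = check_cost_guardrail(8_000, 10_000, 5_000)
healthy = check_cost_guardrail(8_000, 10_000, 20_000)
```

Run as a required step in the deployment pipeline, a check like this moves the discovery from budget reconciliation to architecture review.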

4. Consolidate SaaS AI Visibility

The 80.7 percent SaaS AI adoption rate means a significant portion of your AI spend is invisible to cloud FinOps tools. Build a unified view that includes SaaS subscriptions, API-based AI services, and embedded AI features alongside infrastructure costs.
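A unified view starts with normalizing every source into the same record shape. The sketch below assumes each source can be reduced to (team, monthly cost) pairs; the source names, teams, and amounts are hypothetical, and getting SaaS invoices into this shape is usually the real work.

```python
from collections import defaultdict


def unify_spend(sources):
    """Merge spend from several sources into one per-team view.

    sources maps a source name to a list of (team, monthly_cost_usd) pairs.
    The key 'total' is reserved for the per-team sum, so no source may
    use it as a name.
    """
    if "total" in sources:
        raise ValueError("'total' is reserved and cannot be a source name")
    view = defaultdict(lambda: defaultdict(float))
    for source, items in sources.items():
        for team, cost in items:
            view[team][source] += cost
            view[team]["total"] += cost
    return {team: dict(breakdown) for team, breakdown in view.items()}


# Hypothetical monthly figures from three separate billing sources.
sources = {
    "cloud_infra": [("support", 4000.0), ("commerce", 6000.0)],
    "saas_subscriptions": [("support", 1500.0)],
    "ai_api_usage": [("support", 500.0), ("commerce", 1000.0)],
}
view = unify_spend(sources)
```

In this example, a cloud-only tool would report 4,000 dollars for the support team, while the unified view shows 6,000: a third of that team's AI spend sits outside infrastructure billing.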

5. Align FinOps with AI Governance

The organizations managing AI costs most effectively are the ones where FinOps, AI governance, and platform engineering operate as coordinated functions — not as separate teams that occasionally share spreadsheets. Cost governance is a governance problem, not just a finance problem.

The Bottom Line

The AI cost crisis is not about spending too much. It is about spending blind. Enterprises are investing more in AI than ever — and the investment is justified. The problem is that most organizations cannot see where the money goes, cannot attribute costs to outcomes, and cannot make cost-aware decisions before the spend is committed.

The AI ROI Reckoning asked whether AI investments are producing returns. AI FinOps asks a more fundamental question: do you even know what those investments cost?

Ninety-eight percent of organizations now manage AI spend. Fifty-three percent cannot see the full scope. That gap is where the next round of AI budget overruns, failed ROI calculations, and unpleasant board conversations will originate.

Close the visibility gap first. Then optimize. The order matters.

ViviScape builds AI systems with cost observability and governance embedded from the architecture level — not bolted on after the bill arrives. If your AI spend has outgrown your ability to manage it, let's build the visibility layer you need.
